
Graphics, Vision & Video

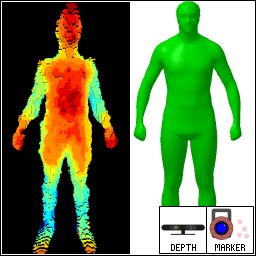

Personalized Depth Tracker Dataset (PDT 13)


Reconstructing a three-dimensional representation of human motion in real time is an important research topic with applications in sports science, human-computer interaction, and the movie industry. This dataset was created to evaluate two kinds of algorithms. First, it can be used to assess the accuracy of methods that estimate the shape of a human from two sequentially captured depth images from a Microsoft Kinect sensor. Second, it can be used to evaluate the accuracy of depth-based full-body trackers.

Publications

- If you use this dataset, you are required to cite the following publication.

- Thomas Helten, Andreas Baak, Gaurav Bharaj, Meinard Müller, Hans-Peter Seidel, Christian Theobalt. Personalization and Evaluation of a Real-time Depth-based Full Body Tracker. In Proceedings of 3DV, 2013.

Terms of Use


The Personalized Depth Tracker Dataset (PDT 13) is licensed by MPI Informatik under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License. Any work that uses this dataset must cite publication [1].

Downloads

Shape Estimation

| | M1 | M2 | M3 | F1 | F2 | F3 |
|---|---|---|---|---|---|---|
| Front depth | obj | obj | obj | obj | obj | obj |
| Back depth | obj | obj | obj | obj | obj | obj |
| Estimated shape | obj | obj | obj | obj | obj | obj |
| Ground-truth shape | obj | obj | obj | obj | obj | obj |

Here, M1, M2, M3, F1, F2, and F3 denote the six actors for whom shape estimation was performed. The "front depth" is the segmented point cloud of the front of the person, and the "back depth" is the corresponding point cloud of the person's back. These point clouds were obtained by unprojecting the depth images captured with a Microsoft Kinect and are saved in the Wavefront OBJ format. The "estimated shape" is the shape obtained by applying our algorithm to the two depth images, while the "ground-truth shape" was obtained by fitting our model to a full-body laser scan. For privacy reasons, these laser scans cannot be made publicly available. The underlying shape model is available for download below.
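The dataset already provides the unprojected point clouds, so the following is only a minimal sketch of the unprojection step, assuming a standard pinhole camera model. The intrinsics (fx, fy, cx, cy), the depth unit, the optional segmentation mask, and the function names are assumptions for illustration; the actual Kinect calibration and axis conventions used for the dataset are not documented on this page.

```python
def unproject_depth(depth, fx, fy, cx, cy, mask=None):
    """Unproject a depth image into 3D points (pinhole model, assumed).

    depth: list of rows; depth[v][u] is the depth at pixel (u, v), 0 = no data.
    fx, fy, cx, cy: hypothetical camera intrinsics in pixels.
    mask: optional list of rows of booleans selecting the segmented person.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue  # skip pixels without a valid depth measurement
            if mask is not None and not mask[v][u]:
                continue  # skip pixels outside the segmented person
            x = (u - cx) * z / fx  # back-project along the image x axis
            y = (v - cy) * z / fy  # back-project along the image y axis
            points.append((x, y, z))
    return points


def write_obj_points(path, points):
    """Write points as Wavefront OBJ vertex records ('v x y z') without faces."""
    with open(path, "w") as f:
        for x, y, z in points:
            f.write(f"v {x:.6f} {y:.6f} {z:.6f}\n")
```

For example, `unproject_depth(depth, 525.0, 525.0, 319.5, 239.5)` uses placeholder intrinsics that are commonly quoted as defaults for a 640 × 480 Kinect depth image; they would have to be replaced by the actual calibration.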

Shape Model

| File | Format |
|---|---|
| Average mesh | obj |
| Eigenvectors | txt |
| Skeleton | txt |
| Joint dependencies | txt |
| Skinning | txt |

In the following, we briefly explain how to interpret these files and how the final mesh is computed for a given shape and pose.

Average mesh:

The average mesh is stored in Wavefront OBJ format and contains 6449 vertices and 12894 triangular faces. The stacked positions of these vertices are denoted by $M(0, x_0)$, which is the average shape in the standard pose $x_0$.
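A minimal sketch of reading the average mesh and stacking its vertex positions into one vector, assuming the stacking follows the same coordinate-block order as the rows of the eigenvector matrix (all X, then all Y, then all Z). The function names and the file name in the usage note are hypothetical.

```python
def read_obj(path):
    """Read vertex positions and triangular faces from a Wavefront OBJ file.

    Only 'v' and 'f' records are handled; face indices are converted to 0-based
    and texture/normal indices ('a/b/c') are ignored. Negative (relative)
    indices are not supported in this sketch.
    """
    vertices, faces = [], []
    with open(path) as f:
        for line in f:
            parts = line.split()
            if not parts:
                continue
            if parts[0] == "v":
                vertices.append(tuple(float(c) for c in parts[1:4]))
            elif parts[0] == "f":
                faces.append(tuple(int(p.split("/")[0]) - 1 for p in parts[1:4]))
    return vertices, faces


def stack_xyz(vertices):
    """Stack positions as [x_1..x_N, y_1..y_N, z_1..z_N] (assumed order,
    matching the X/Y/Z row blocks of the eigenvector matrix)."""
    return ([v[0] for v in vertices]
            + [v[1] for v in vertices]
            + [v[2] for v in vertices])
```

For the average mesh, `read_obj("average_mesh.obj")` should return 6449 vertices and 12894 faces, and `stack_xyz` then yields a vector with 19347 entries.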

Eigenvectors:

The eigenvectors are stored as a large matrix in human-readable text format. The matrix has size 19347 × 13 (19347 = 3 × 6449) and contains 13 coefficients per row for the X, Y, and Z coordinates of all vertices: the first 6449 rows hold the coefficients for X, the next 6449 rows those for Y, and the last 6449 rows those for Z. We denote the eigenvector matrix by $E$. To obtain a personalized shape $M(\varphi, x_0)$ in the standard pose $x_0$, multiply $E$ by the shape parameters $\varphi \in \mathbb{R}^{13}$ and add the result to the average shape:

$$M(\varphi, x_0) = M(0, x_0) + E\varphi.$$
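The shape synthesis $M(\varphi, x_0) = M(0, x_0) + E\varphi$ can be sketched as follows, assuming the text file is whitespace-separated with one matrix row per line and that the mean shape is given in the stacked order described above (all X, then all Y, then all Z). The function names are hypothetical.

```python
def load_matrix(path):
    """Load a whitespace-separated text matrix, one row per non-empty line."""
    with open(path) as f:
        return [[float(x) for x in line.split()] for line in f if line.strip()]


def personalized_shape(mean_stacked, E, phi):
    """Compute M(phi, x0) = M(0, x0) + E phi for stacked coordinates.

    mean_stacked: 3N values of M(0, x0) in [x..., y..., z...] order.
    E: 3N rows with len(phi) coefficients each (19347 x 13 for this model).
    phi: shape parameters (13 values for this model).
    """
    if len(E) != len(mean_stacked):
        raise ValueError("E must have one row per stacked coordinate")
    # Each stacked coordinate is offset by the dot product of its row of E with phi.
    return [m + sum(e * p for e, p in zip(row, phi))
            for m, row in zip(mean_stacked, E)]


def unstack_xyz(stacked):
    """Convert [x..., y..., z...] back into a list of (x, y, z) vertices."""
    n = len(stacked) // 3
    return list(zip(stacked[:n], stacked[n:2 * n], stacked[2 * n:]))
```

With `phi` set to 13 zeros, the result equals the average shape $M(0, x_0)$, which is a quick sanity check after loading the files.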

Skeleton:

The skeleton file uses a custom format and describes joint positions and joint axes that are compatible with the average shape in the standard pose.

In this format, <Type> == 0 denotes a rotational joint that rotates about the axis (<AxisX>, <AxisY>, <AxisZ>), and <Type> == 1 denotes a translational joint that moves along the axis (<AxisX>, <AxisY>, <AxisZ>). Furthermore, (<OffsetX>, <OffsetY>, <OffsetZ>) denotes the offset relative to the parent joint in the standard pose. The dofs section specifies that some joints are grouped and moved simultaneously by a single degree of freedom instead of independently. This is not relevant for tracking and is mentioned only for completeness.
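Parsing the skeleton file is not shown. The following sketch only illustrates how a joint of each type could be turned into a 4 × 4 transform relative to its parent. The angle unit (radians), the order of applying the offset and the joint motion, and all function names are assumptions, not the dataset's documented convention.

```python
import math


def rotation_about_axis(axis, angle):
    """3x3 rotation matrix for `angle` radians about `axis` (Rodrigues' formula)."""
    n = math.sqrt(sum(a * a for a in axis))
    x, y, z = (a / n for a in axis)
    c, s = math.cos(angle), math.sin(angle)
    t = 1.0 - c
    return [[t * x * x + c, t * x * y - s * z, t * x * z + s * y],
            [t * x * y + s * z, t * y * y + c, t * y * z - s * x],
            [t * x * z - s * y, t * y * z + s * x, t * z * z + c]]


def joint_local_transform(joint_type, axis, offset, value):
    """4x4 transform of a joint relative to its parent (assumed convention:
    translate by the rest-pose offset, then apply the joint's own motion).

    joint_type 0: rotate by `value` radians about `axis`.
    joint_type 1: translate by `value` along the normalized `axis`.
    """
    if joint_type == 0:
        R = rotation_about_axis(axis, value)
        d = (0.0, 0.0, 0.0)
    elif joint_type == 1:
        R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
        n = math.sqrt(sum(a * a for a in axis))
        d = tuple(value * a / n for a in axis)
    else:
        raise ValueError(f"unknown joint type {joint_type}")
    # [R | offset + d] equals Translate(offset) composed with [R | d].
    return [R[i] + [offset[i] + d[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def transform_point(T, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    return tuple(sum(T[r][c] * q for c, q in enumerate((*p, 1.0))) for r in range(3))
```

Global joint transforms would then follow by multiplying these local transforms along the chain from the root to each joint.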

Joint dependencies:

In practice, the position offsets (<OffsetX>, <OffsetY>, <OffsetZ>) are not used, because the global positions of the joints in the standard pose are recomputed for a personalized shape $M(\varphi, x_0)$. To this end, the position of each joint is defined as a linear combination of the vertices of $M(\varphi, x_0)$. The resulting skeleton is called the personalized skeleton. The corresponding vertices and weights are stored in the joint dependencies file.
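Computing the personalized skeleton then amounts to a weighted sum of vertex positions per joint. This is a minimal sketch; the in-memory layout of the dependencies (a list of (vertex index, weight) pairs per joint) and the function name are hypothetical, and parsing the file is not shown.

```python
def personalized_joints(vertices, dependencies):
    """Compute joint positions as weighted sums of mesh vertices.

    vertices: (x, y, z) positions of the personalized mesh M(phi, x0).
    dependencies: for each joint, a list of (vertex_index, weight) pairs
        (hypothetical layout).
    """
    joints = []
    for deps in dependencies:
        # Weighted sum of the dependent vertices, one coordinate at a time.
        joints.append(tuple(
            sum(w * vertices[i][k] for i, w in deps) for k in range(3)))
    return joints
```

Because the joints are tied to the vertices, they move consistently with the mesh whenever the shape parameters change.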

Skinning:

Finally, linear blend skinning is used in combination with the personalized skeleton to compute the mesh vertex positions $M(\varphi, x)$ for an arbitrary pose $x$. The skinning weights and vertices are saved in a human-readable text file.
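Linear blend skinning computes each posed vertex as a weighted sum of the rest-pose vertex transformed by each influencing joint. The sketch below assumes per-joint 4 × 4 matrices that already map the standard pose $x_0$ to the target pose $x$; the weight layout and function name are hypothetical, and parsing the skinning file is not shown.

```python
def skin_vertices(rest_vertices, skin_weights, joint_matrices):
    """Linear blend skinning: v'_i = sum_j w_ij * (T_j @ [v_i, 1]).

    rest_vertices: (x, y, z) positions of M(phi, x0) in the standard pose.
    skin_weights: per vertex, a list of (joint_index, weight) pairs
        (hypothetical layout; weights assumed to sum to 1).
    joint_matrices: per joint, a 4x4 matrix mapping the standard pose x0 to the
        target pose x (global transform in x times the inverse of the global
        transform in x0).
    """
    posed = []
    for v, weights in zip(rest_vertices, skin_weights):
        p = (v[0], v[1], v[2], 1.0)  # homogeneous rest-pose position
        acc = [0.0, 0.0, 0.0]
        for j, w in weights:
            T = joint_matrices[j]
            for r in range(3):
                acc[r] += w * sum(T[r][c] * p[c] for c in range(4))
        posed.append(tuple(acc))
    return posed
```

If all joint matrices are the identity, the function returns the rest-pose vertices unchanged, which corresponds to $M(\varphi, x_0)$.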

Pose Estimation

| Actor | Sequence D1 | Sequence D2 | Sequence D3 | Sequence D4 |
|---|---|---|---|---|
| M1 | 7z | 7z | 7z | 7z* |
| M2 | 7z | 7z | 7z | 7z |
| M3 | 7z | 7z | 7z | 7z |
| F1 | 7z* | 7z* | 7z* | 7z* |
| F2 | 7z | 7z | 7z | 7z |
| F3 | - | - | - | - |

The sequences are compressed with 7-Zip. Each archive contains all depth images, the ground-truth joint positions, and the ground-truth marker positions used to calculate the ground-truth joint positions. The sequences were tracked using the estimated shapes available above. To visualize or convert the sequence files, you can use the MATLAB files provided here. Please note that for sequences marked with an asterisk (*), the calibration between the depth data and the ground-truth data is not optimal. However, this global offset does not affect the result when using the error metric described in [1]. No sequences were recorded for actor F3.

If you are interested in the shape model or if you want to download the dataset, please write an email to gvvperfcapeva [at] mpi-inf.mpg.de.

Results

Pose estimation

The following table lists the average joint errors in millimeters, with standard deviations in parentheses, for different trackers on each actor and sequence.

| Tracker | M1 D1 | M1 D2 | M1 D3 | M1 D4 | M2 D1 | M2 D2 | M2 D3 | M2 D4 | M3 D1 | M3 D2 | M3 D3 | M3 D4 | F1 D1 | F1 D2 | F1 D3 | F1 D4 | F2 D1 | F2 D2 | F2 D3 | F2 D4 | Mean |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Helten3DV2013[1] | 33 (24) | 51 (47) | 71 (83) | 141 (146) | 28 (21) | 49 (57) | 50 (34) | 148 (135) | 29 (26) | 51 (52) | 65 (76) | 128 (143) | 49 (77) | 59 (74) | 102 (116) | 163 (176) | 28 (19) | 37 (26) | 61 (65) | 134 (147) | 74 |
| BaakICCV2011[2] | 41 (24) | 55 (41) | 70 (72) | 150 (140) | 35 (23) | 56 (53) | 54 (37) | 193 (142) | 33 (23) | 57 (55) | 70 (78) | 137 (145) | 54 (63) | 62 (65) | 141 (295) | 182 (228) | 29 (20) | 43 (35) | 65 (69) | 123 (114) | 83 |
| Kinect SDK | 59 (85) | 86 (157) | 138 (157) | 160 (163) | 38 (48) | 67 (98) | 81 (87) | 183 (154) | 43 (50) | 85 (114) | 87 (108) | 133 (149) | 54 (73) | 98 (123) | 112 (117) | 187 (184) | 36 (37) | 58 (81) | 79 (98) | 130 (147) | 96 |
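The Mean column appears to be the unweighted average of the 20 per-sequence values (for example, 1477 / 20 = 73.85, rounded to 74). The sketch below checks this using the values from the table and adds a plain per-sequence joint-error computation that pools Euclidean distances over all frames and joints and uses the population standard deviation. That computation is an assumption for illustration and not necessarily the error metric of [1]; the names are hypothetical.

```python
import math

# Per-sequence average joint errors (mm) copied from the table above,
# in the order M1 D1..D4, M2 D1..D4, M3 D1..D4, F1 D1..D4, F2 D1..D4.
TABLE_MEANS = {
    "Helten3DV2013": [33, 51, 71, 141, 28, 49, 50, 148, 29, 51,
                      65, 128, 49, 59, 102, 163, 28, 37, 61, 134],
    "BaakICCV2011": [41, 55, 70, 150, 35, 56, 54, 193, 33, 57,
                     70, 137, 54, 62, 141, 182, 29, 43, 65, 123],
    "Kinect SDK": [59, 86, 138, 160, 38, 67, 81, 183, 43, 85,
                   87, 133, 54, 98, 112, 187, 36, 58, 79, 130],
}


def joint_error_stats(tracked, ground_truth):
    """Mean and population standard deviation of the Euclidean distances
    between corresponding joints, pooled over all frames and joints.

    tracked, ground_truth: lists of frames, each a list of (x, y, z) positions.
    """
    d = [math.dist(p, q)
         for frame_t, frame_g in zip(tracked, ground_truth)
         for p, q in zip(frame_t, frame_g)]
    mean = sum(d) / len(d)
    std = math.sqrt(sum((x - mean) ** 2 for x in d) / len(d))
    return mean, std


if __name__ == "__main__":
    for tracker, values in TABLE_MEANS.items():
        print(f"{tracker}: {sum(values) / len(values):.2f}")
    # Helten3DV2013: 73.85, BaakICCV2011: 82.50, Kinect SDK: 95.70
```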

- [1] Thomas Helten, Meinard Müller, Hans-Peter Seidel, Christian Theobalt. Real-time Body Tracking with One Depth Camera and Inertial Sensors. Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2013.

- [2] Andreas Baak, Meinard Müller, Gaurav Bharaj, Hans-Peter Seidel, Christian Theobalt. A Data-Driven Approach for Real-Time Full Body Pose Reconstruction from a Depth Camera. Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2011.